Claude vs. the Pentagon

A full timeline of Anthropic's complicated relationship with the Department of War

Over the past 16 months, Anthropic went from Pentagon darling to being labeled a “supply chain risk” (a designation previously reserved for foreign adversaries). Along the way, OpenAI swooped in with a deal its own CEO called “opportunistic and sloppy,” millions of users deleted ChatGPT in protest, and the whole thing ended up on the cover of TIME.

Here’s how we got here.

November 7, 2024 - Anthropic Enters the Defense World

Anthropic, Palantir Technologies, and Amazon Web Services announce a partnership to bring Claude AI models into U.S. intelligence and defense agencies. Claude is deployed within Palantir’s Impact Level 6 environment, a classified system handling data up to the “secret” level. It’s one of the first times a frontier AI model operates inside classified government networks.

The announcement raises concerns. Anthropic has built its brand on being the “safety-first” AI lab. Critics immediately question whether defense contracts square with that identity.

May 22, 2025 - Anthropic Draws the CBRN Line

Anthropic releases Claude Opus 4 under its strictest safety measures yet, activating AI Safety Level 3 (ASL-3) for the first time. Internal testing could not rule out that Opus 4 might meaningfully help someone with a basic STEM background produce biological, chemical, radiological, or nuclear weapons. Chief Science Officer Jared Kaplan put it plainly: “you could try to synthesize something like COVID or a more dangerous version of the flu, and basically, our modeling suggests that this might be possible.”

ASL-3 is the threshold in Anthropic’s Responsible Scaling Policy reserved for models that could “substantially increase” weapons access for non-experts.

July 14, 2025 - The $800 Million Pentagon AI Bet

The Pentagon’s Chief Digital and Artificial Intelligence Office (CDAO) awards four contracts worth up to $200 million each for prototyping “agentic AI”: systems that can not only generate plans but also act on them autonomously. The winners: Anthropic, Google, OpenAI, and xAI.

Anthropic’s press release is bullish. The company touts its work with the National Laboratories, its Palantir partnership on classified networks, and custom “Claude Gov” models built specifically for national security customers. Head of Public Sector Thiyagu Ramasamy calls the award “a new chapter in Anthropic’s commitment to supporting U.S. national security.”

January 9, 2026 - Hegseth’s “AI-First” War Machine

Defense Secretary Pete Hegseth releases the Department of War’s AI Acceleration Strategy. The document is aggressive, ambitious, and unapologetically hawkish. It mandates that the military become an “AI-first warfighting force” and launches seven “Pace-Setting Projects,” including Swarm Forge (pairing elite combat units with tech innovators to test AI-enabled tactics), Agent Network (AI for “campaign planning to kill chain execution”), and Open Arsenal (turning intelligence into weapons “in hours, not years”).

The strategy does address “responsible AI,” just not in the way most people mean it. The memo redefines the term to mean AI free from “ideological tuning,” with no usage policy constraints beyond what statute requires. The document explicitly signals that future contracts will include “any lawful use” language (remember this for later), foreclosing the kind of safety carveouts Anthropic had been negotiating.

Three days later, in a speech at SpaceX’s Starbase facility in South Texas alongside Elon Musk, Hegseth makes his position unmistakable:

“Department of War AI will not be woke. It will work for us. We’re building war-ready weapons and systems, not chatbots for an Ivy League faculty lounge.”

January 3, 2026 - Claude Goes to War

U.S. special operations forces raid Caracas, capturing Venezuelan President Nicolás Maduro and his wife Cilia Flores, who are flown to New York to face narcoterrorism charges. Venezuelan officials report a death toll of roughly 100; Cuba separately confirms 32 of its own soldiers and intelligence personnel killed. Reports of Claude’s involvement don’t surface until mid-February, when the Wall Street Journal reveals the military deployed Claude through Anthropic’s Palantir integration during the active operation, not just in planning. The exact role remains unconfirmed.

A senior Anthropic executive, during a routine Palantir meeting, questions how Claude had been used in the operation. The Pentagon doesn’t like this. A senior administration official tells Axios the Pentagon is reevaluating its partnership with Anthropic, and officials begin weighing cancellation of a contract worth up to $200 million. The relationship begins to sour.

February 2026 - Anthropic Drops Its Safety Pledge

Anthropic guts the central commitment of its Responsible Scaling Policy (RSP). Since 2023, the company had pledged to pause training more powerful AI models if their capabilities outpaced its ability to implement adequate safety measures. RSP v3.0 removes that hard limit entirely.

Chief Science Officer Jared Kaplan tells TIME:

“We felt that it wouldn’t actually help anyone for us to stop training AI models. We didn’t really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments … if competitors are blazing ahead.”

Chris Painter, director of policy at METR (a nonprofit focused on evaluating AI models for risky behavior), who reviewed an early draft of the policy with Anthropic’s permission, says:

“This is more evidence that society is not prepared for the potential catastrophic risks posed by AI.”

Painter calls the shift a sign that Anthropic “believes it needs to shift into triage mode with its safety plans, because methods to assess and mitigate risk are not keeping up with the pace of capabilities.”

February 24, 2026 - The Ultimatum

Defense Secretary Pete Hegseth summons Anthropic CEO Dario Amodei to the Pentagon. Anthropic’s post-meeting statement describes the exchange in appreciative terms, but Pentagon officials had billed it beforehand as a “shit-or-get-off-the-pot meeting,” and the substance matched that framing. Hegseth gives Amodei a deadline: 5:01 p.m. on Friday, February 27. Allow unrestricted military use of Claude for “all legal purposes,” or face the consequences.

The threatened consequences are severe:

Cancellation of Anthropic’s $200 million contract

Invocation of the Defense Production Act to compel Anthropic’s cooperation

Designation as a “supply chain risk” (effectively a government blacklist)

The move harkens back to an earlier quote from Hegseth:

“We will not employ AI models that won't allow you to fight wars.”

February 26, 2026 - “We Cannot in Good Conscience Accede”

With one day left before the deadline, Dario Amodei publishes a statement on Anthropic’s website. It’s a remarkable document: equal parts national security argument, democratic values argument, and moral argument.

He leads by establishing Anthropic’s defense bona fides:

“I believe deeply in the existential importance of using AI to defend the United States and other democracies, and to defeat our autocratic adversaries.”

Then he names the two red lines.

On fully autonomous weapons:

“Today, frontier AI systems are simply not reliable enough to power fully autonomous weapons. We will not knowingly provide a product that puts America’s warfighters and civilians at risk.”

On mass domestic surveillance:

“Using these systems for mass domestic surveillance is incompatible with democratic values. AI-driven mass surveillance presents serious, novel risks to our fundamental liberties.”

He notes that the Pentagon’s threats are “inherently contradictory: one labels us a security risk; the other labels Claude as essential to national security.”

And then, the line heard around the industry:

“Regardless, these threats do not change our position: we cannot in good conscience accede to their request.”

In a CBS News interview the following night (Friday), hours after Trump ordered federal agencies to cut off Anthropic, Amodei doubles down:

“Our position is clear. We have these two red lines. We’ve had them from day one. We are still advocating for those red lines. We’re not going to move on those red lines.”

“We don’t want to sell something that we don’t think is reliable, and we don’t want to sell something that could get our own people killed, or that could get innocent people killed.”

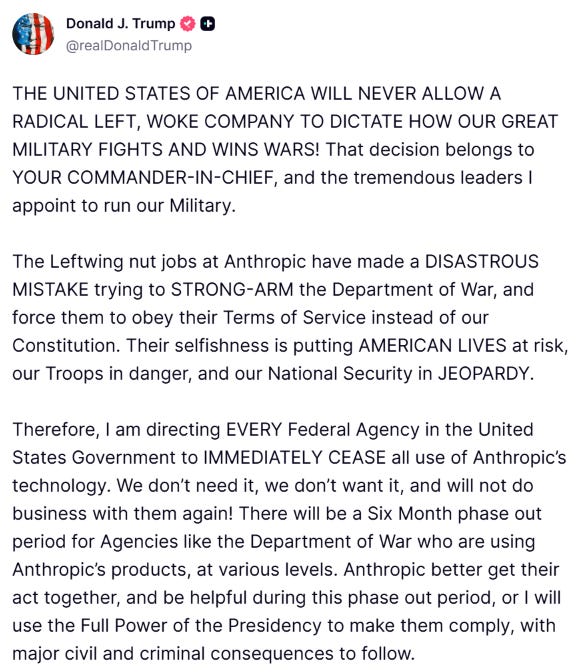

February 27, 2026 - The Hammer Falls

The deadline passes. Trump posts to Truth Social ordering every federal agency to “immediately cease” all use of Anthropic’s technology, with a six-month phase-out window for agencies already running it. That same day, Defense Secretary Pete Hegseth announces he’s directing the Pentagon to designate Anthropic a “Supply-Chain Risk to National Security,” the first time that label has ever been applied to an American company.

Anthropic’s formal response is measured but pointed. The company calls the moves “unprecedented and unlawful,” arguing the government is retaliating against the company for First Amendment-protected speech.

Despite the ban, Anthropic’s models remain in active use supporting U.S. military operations in Iran. Until that week, Anthropic was the only leading AI company cleared to offer services on classified networks, so there was no ready replacement: the military was operationally dependent on a company it had just expelled.

February 27, 2026 - OpenAI Steps In

Hours after Anthropic’s blacklisting, OpenAI CEO Sam Altman posted to X that his company had reached an agreement with the Department of War to deploy its models on the department’s classified network.

The deal’s framing deserves scrutiny. OpenAI accepted the “all lawful uses” contract language the Pentagon had demanded (the same language Anthropic refused) but required the DoW to define specific legal constraints on surveillance and autonomous weapons directly in the contract text. Analysts flagged that the wording appeared far softer than Anthropic’s terms, with provisions on surveillance potentially leaving open lawful mass data processing.

The timing was hard to overlook. OpenAI had its deal ready to announce within hours of a competitor’s government exile, and the gap it stepped into was both strategically and operationally significant.

February 28-March 2, 2026 - The Revolt

The public response is swift and furious. By Saturday, ChatGPT uninstalls surge 295% in a single day. Downloads drop 13%. One-star reviews spike 775%. Five-star reviews fall by half.

The organized boycott, “QuitGPT,” claims that 1.5 million people took action within days, and over 2.5 million supporters by the time protesters gathered outside OpenAI’s San Francisco headquarters on March 3. The campaign’s website pulls no punches: it cites killer robots, mass surveillance of Americans, and OpenAI leadership’s ties to the Trump administration.

Meanwhile, Claude’s downloads jump 37-51% and the app rises to #1 on the U.S. App Store, a position it holds for days. By early March, Similarweb estimates Claude’s U.S. downloads are running roughly 20 times higher than January levels (though the firm notes the political controversy may not be the only factor).

Anthropic doesn’t have to say a word. The market speaks for it.

March 3, 2026 - Altman: “Opportunistic and Sloppy”

Facing a user revolt, Sam Altman publishes an internal memo (then reposts it on X) in which he acknowledges that OpenAI botched the optics:

“One thing I think I did wrong: we shouldn't have rushed to get this out on Friday. The issues are super complex, and demand clear communication. We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy. Good learning experience for me as we face higher-stakes decisions in the future.”

OpenAI then moved to amend the contract, adding explicit language that “the AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals.” Altman also stated that intelligence agencies within the Department of War (including the NSA, cited as an example) would be barred from using OpenAI’s services under the existing agreement, with any such use requiring a separate contract modification.

The walkback lands harder given OpenAI’s original announcement, which had claimed the deal contained “more guardrails than any previous agreement for classified AI deployments, including Anthropic’s.” It’s hard not to see this as either a severe miscommunication or an outright lie, given that the changes came only after observers noticed the original language lacked the explicit surveillance prohibitions Anthropic had fought to enshrine and been blacklisted for demanding.

March 4, 2026 - Amodei’s Leaked Memo: “Straight Up Lies”

An internal Anthropic memo from Amodei to staff (sent the day negotiations with the Pentagon collapsed and then leaked to The Information) is 1,600 words of barely contained fury.

Amodei calls OpenAI’s messaging “mendacious” and describes Altman’s public statements as “straight up lies,” accusing him of falsely “presenting himself as a peacemaker and dealmaker.” On OpenAI’s safety provisions specifically, he writes that approaches like Palantir’s classifier system are “maybe 20% real and 80% safety theater.”

Amodei argues that it’s relatively easy to tell from a model’s inputs and outputs whether it is being used to conduct cyberattacks, but nearly impossible to determine the nature and context of those operations, which is the kind of distinction that matters for military applications. The Palantir safety layer, in his reading, was never designed to solve that problem; it was designed to give employees something that looks like a solution.

On the positioning, he’s blunt: the episode revealed “who they really are.”

March 5, 2026 - “Supply Chain Risk” Goes Official

The Pentagon formally notifies Anthropic that the company and its products pose a risk to the U.S. supply chain. Anthropic is the only American company ever to be publicly named a supply chain risk, as the designation has traditionally been used against foreign adversaries. The label requires defense vendors and contractors to certify that they don’t use Anthropic’s models in their work with the Pentagon. Anthropic disputes the scope, arguing the relevant statute limits the designation to direct use within specific DoW contracts rather than any contractor that has ever touched Claude.

Meanwhile, two people with knowledge of the matter confirm the military is using AI systems from Palantir to identify potential targets in ongoing attacks on Iran, with that software relying in part on Anthropic’s Claude. According to reporting from the Wall Street Journal and the New York Times, the U.S. and Israel adjusted the timing of their attack in part to take advantage of new CIA intelligence about a gathering of senior Iranian officials at a leadership compound in central Tehran where Supreme Leader Ali Khamenei would be present. Claude’s own role is described as broader but less direct than target timing: according to a person with knowledge of Anthropic’s work with the Defense Department, Claude is used to help military analysts sort through intelligence and does not directly provide targeting advice.

The Pentagon continues using technology from a company it just labeled a national security risk.

March 9, 2026 - Anthropic Sues; TIME Covers the Backlash

Anthropic files two federal lawsuits challenging the supply chain risk designation: one in the U.S. District Court for the Northern District of California and a second in the U.S. Court of Appeals for the D.C. Circuit. The 48-page California suit argues that the designation violated the company’s First Amendment rights, exceeded the statutory authority Congress granted under supply chain risk law, and denied Anthropic adequate due process. The company’s position is that the government doesn’t have to buy its products or agree with its views, but it can’t use the machinery of the state to punish protected speech about AI safety.

Two days later, TIME publishes “How Anthropic Became the Most Disruptive Company in the World.” The piece traces Anthropic’s unlikely transformation from the AI industry’s designated responsible adult into its most disruptive force. Claude Code’s annualized revenue topped $1 billion by the end of 2025 and had more than doubled to $2.5 billion by February, putting Anthropic on track to surpass OpenAI’s revenue by the end of 2026, according to estimates from Epoch and SemiAnalysis.

Political scientist Rebecca Lissner, whose work Jacobs cited in that same piece, had warned in a prior TIME article:

“If 2026 proves to be the year of AI takeoff, the concerns of numerous Americans about its effects on our economy, politics, and human relationships could coalesce into a potent populist political force.”

March 11, 2026 - An Oppenheimer Moment

The Atlantic publishes “Dario Amodei’s Oppenheimer Moment” by Ross Andersen.

The piece draws a direct parallel between Amodei and the nuclear physicists of the mid-20th century (Szilard, Teller, Oppenheimer) who dreamed of utopian applications for their work and wound up with no say in how it was used. Andersen links Amodei’s essay “Machines of Loving Grace” (which predicts AI compressing decades of scientific progress, curing cancer, and doubling human lifespans by 2035) to the same strain of techno-optimism that once imagined atomic-powered cars and harbors blasted out of the Alaskan coastline by nuclear detonations.

Then the thesis:

“Implicit in this vision is the hope that in the end, when the chips are down, Amodei, or someone very much like him, will have some say in how AI will be used. But if Anthropic’s recent experience with the Pentagon is any indication, that likely won’t be his decision to make.”

Andersen closes on the line that sticks:

“After the builders of the atomic bomb finished their work in the New Mexico desert, they very quickly learned how little say they would have in its use. The weapons were driven away on trucks, and in the weeks afterward, no one called the scientists to get a green light for the bombing of Hiroshima or Nagasaki... Their leverage was front-loaded: They could choose to create their terrible weapons or not, but once they’d successfully tested even one, they’d already forfeited it.”

Where Things Stand

As of this writing, here’s the scoreboard:

Anthropic has lost its Pentagon contract, been labeled a supply chain risk, and is suing the U.S. government. Its safety-first brand has never been more popular with consumers, but its government business is in ruins. And it has quietly dropped the signature safety pledge that defined its corporate identity.

OpenAI has the Pentagon deal, but after a humiliating walkback and a user revolt, it added surveillance restrictions it initially left out. Its CEO admitted the whole thing looked “opportunistic and sloppy.” Millions deleted its app.

The Pentagon is using AI in active military operations (including airstrikes on Iran) while simultaneously banning the company whose AI it’s actually running on some classified networks. It labeled an American company a security risk using a tool designed for foreign adversaries, and did so in apparent retaliation for that company exercising its First Amendment rights.

The public is angry, confused, and increasingly organized.

Somewhere in the background, the AI models keep getting more powerful, the wars keep escalating, and the question The Atlantic raised hangs over all of it like a mushroom cloud:

Once you build the thing, do you still get a say in what it does?