Why OpenAI's new o3 model is a big deal

What the reasoning model's sudden upgrade means for 2025 and beyond

OpenAI's shipmas event concluded with the grand unveiling of o3, a model family designed to outthink and outperform. OpenAI skipped the name o2 to avoid trademark tangles with British telecom giant O2, so o3 and its counterpart, o3-mini, arrive as the direct successors to the o1 reasoning model.

While o1 introduced reasoning capabilities, o3 refines and amplifies them. Neither model is widely accessible yet: the preview is reserved for safety researchers. OpenAI's cautious approach aligns with CEO Sam Altman's vision of a federal testing framework to oversee the deployment of such potent technologies (though implementation of this in 2025 feels unlikely).

OpenAI has not committed to a date for o3's general availability, teasing only that it will arrive sometime after January 2025.

Inside the machine

At the heart of o3 lies an enhanced reasoning mechanism. Unlike conventional language models, o3 engages in self-verification, fact-checking its responses to minimize the errors that often plague lesser models. This introspective process, however, introduces latency. o3 takes seconds to minutes longer to deliver answers, a trade-off for increased reliability in complex domains like physics, mathematics, and other sciences.

o3's architecture, like o1’s before it, incorporates a "private chain of thought," allowing it to deliberate and strategize before responding. Given a prompt, o3 doesn't rush. It pauses, explores related queries, and meticulously crafts its reasoning pathway. This method culminates in a summarized, accurate response, a testament to its advanced cognitive framework.

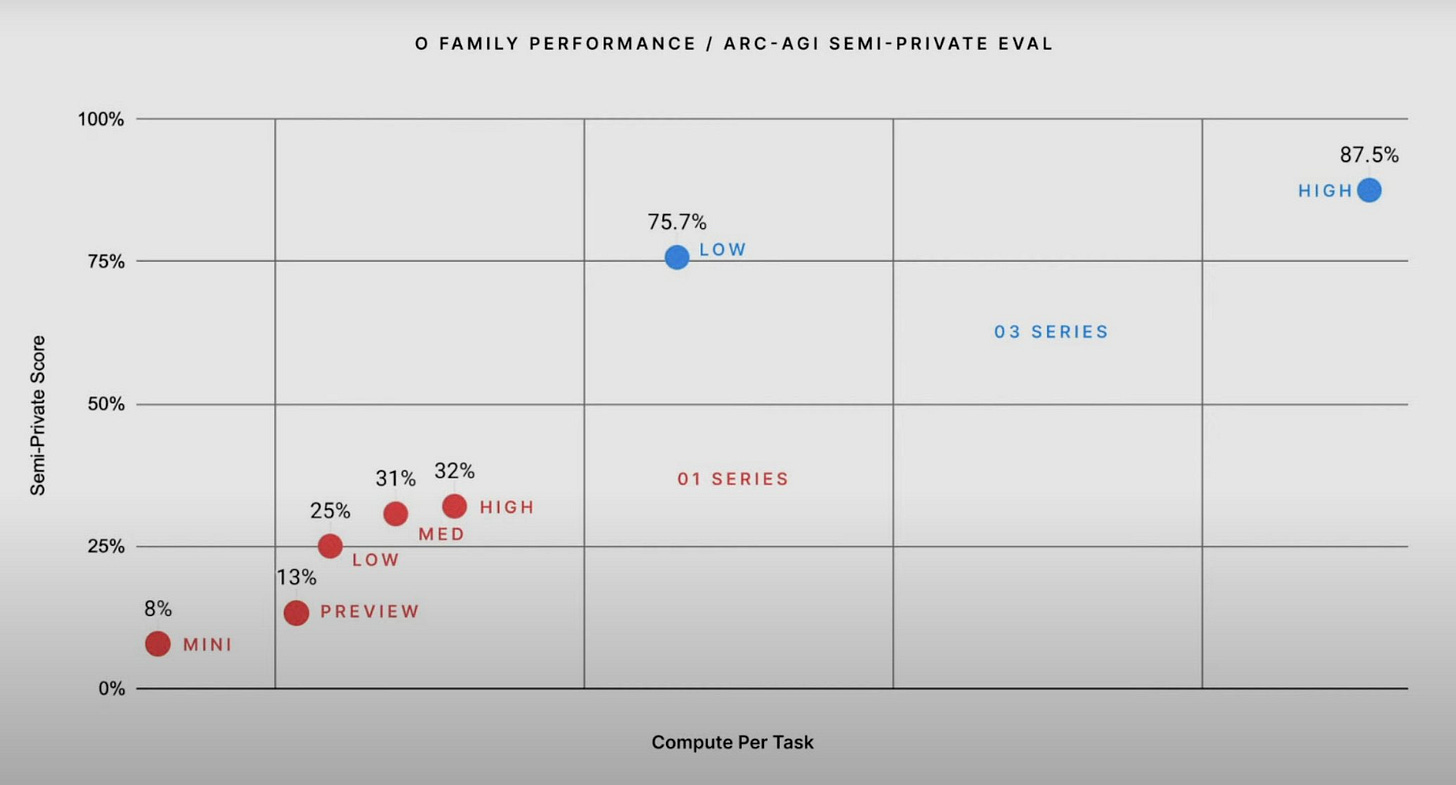

A standout feature of o3 is its adjustable reasoning time. Users can set the model to low, medium, or high thinking time. More time equates to deeper analysis and superior performance, providing flexibility based on task complexity and required precision.
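To make the trade-off concrete, here is a toy sketch in Python. It is not how o3 works internally and it does not call any OpenAI API. The `toy_reasoner` function, the `EFFORT_BUDGET` table, and its numbers are all hypothetical stand-ins: a simple numerical routine gets a larger "thinking" budget at higher effort, keeps its intermediate steps private, checks its own result, and returns only a summary.

```python
from dataclasses import dataclass

# Hypothetical mapping from effort level to an iteration budget.
# Illustrative numbers only; these are not OpenAI's settings.
EFFORT_BUDGET = {"low": 1, "medium": 3, "high": 6}


@dataclass
class Answer:
    value: float      # the summarized response the user sees
    steps_used: int   # how much of the thinking budget was spent
    verified: bool    # whether the self-check passed


def toy_reasoner(target: float, effort: str = "medium", tol: float = 1e-9) -> Answer:
    """Estimate sqrt(target) for target > 0 with Newton's method under a budget."""
    budget = EFFORT_BUDGET[effort]
    private_trace = []   # stand-in for a private chain of thought
    guess = target       # deliberately poor starting point
    steps = 0
    for steps in range(1, budget + 1):
        guess = 0.5 * (guess + target / guess)   # one refinement step
        private_trace.append(guess)
        if abs(guess * guess - target) < tol:    # self-verification: stop early
            break
    verified = abs(guess * guess - target) < tol
    # Only the summary leaves the function; private_trace is never returned.
    return Answer(value=guess, steps_used=steps, verified=verified)


if __name__ == "__main__":
    for level in ("low", "medium", "high"):
        print(level, toy_reasoner(2.0, effort=level))
```

In this toy, the low and medium settings run out of budget before the self-check passes, while the high setting stops as soon as the check succeeds. That mirrors the trade-off described above: more thinking time costs more work but yields a more reliable answer.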

o3's benchmarks

The true testament to o3's prowess lies in its benchmark performances. On the ARC-AGI Public Benchmark, o3 scores a remarkable 75.7%, eclipsing its predecessor and edging closer to the benchmark's human-level threshold of 85%. In high-compute mode, o3 achieves an impressive 87.5%, clearing that threshold and signaling a potential breakthrough toward AGI.

Beyond ARC-AGI, o3 dominates other evaluations. It outperforms o1 by 22.8 percentage points on SWE-Bench Verified and achieves a Codeforces rating of 2727, besting even OpenAI's chief scientist. In the AIME 2024 assessment, o3 scores 96.7%, missing only one question, and attains 87.7% on GPQA Diamond. On EpochAI’s Frontier Math, o3 solves 25.2% of problems, where no other model has exceeded 2%.
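A few of these figures are easier to weigh with the arithmetic spelled out. The short Python sketch below recomputes them from the numbers quoted above. The helper names are hypothetical, and the 30-question total for AIME 2024 is an assumption (the AIME I and AIME II papers, 15 questions each) that the reported score appears to be consistent with.

```python
AIME_QUESTIONS = 30          # assumption: AIME I + AIME II, 15 questions each
HUMAN_THRESHOLD = 85.0       # ARC-AGI human-level threshold quoted above


def aime_percent(missed: int, total: int = AIME_QUESTIONS) -> float:
    """Percentage score when `missed` of `total` questions are wrong."""
    return round(100 * (total - missed) / total, 1)


def gap_to_human(score: float, threshold: float = HUMAN_THRESHOLD) -> float:
    """Percentage points above (+) or below (-) the human-level threshold."""
    return round(score - threshold, 1)


def improvement_factor(new: float, old: float) -> float:
    """How many times larger the new score is than the old one."""
    return round(new / old, 1)


if __name__ == "__main__":
    print(aime_percent(1))                 # one missed question out of 30
    print(gap_to_human(75.7))              # standard-compute ARC-AGI score
    print(gap_to_human(87.5))              # high-compute ARC-AGI score
    print(improvement_factor(25.2, 2.0))   # Frontier Math vs. the 2% ceiling
```

Under those assumptions, one missed question out of 30 reproduces the 96.7% AIME figure, the standard ARC-AGI score sits 9.3 points below the human-level threshold, and the high-compute score sits 2.5 points above it.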

These achievements, while promising, await external validation. OpenAI's internal evaluations showcase o3's capabilities, but the broader AI community will soon test its limits.

AGI on the horizon?

Artificial General Intelligence, an AI that matches or surpasses human intelligence across all tasks, has long been the Holy Grail of AI research. o3's advancements reignite debates about AGI's imminence. OpenAI defines AGI as “highly autonomous systems that outperform humans at most economically valuable work.” Achieving this milestone would redefine industries, economies, and societal structures.

o3's performance on ARC-AGI suggests OpenAI is edging closer to this definition. A score of 87.5% in high-compute mode surpasses the human benchmark, hinting at AGI-like capabilities. However, AGI's realization isn't solely about benchmark scores. It encompasses adaptability, understanding, and the ability to generalize knowledge across disparate domains, a complicated challenge that o3 begins to address but doesn't fully meet.

Should o3 or its successors claim AGI status in 2025, regulatory frameworks, ethical considerations, and societal impacts will demand immediate attention. OpenAI's insistence on a federal testing framework underscores the gravity of such advancements and is meant to ensure that AGI's deployment aligns with safety and ethical standards.

Rivalries in the reasoning revolution

OpenAI's o3 release isn't an isolated event. The success of reasoning models has spurred a surge of similar developments across the AI landscape. Google, DeepSeek, and Alibaba's Qwen team have each released reasoning models of their own, challenging OpenAI's dominance. This may signify a paradigm shift from brute-force scaling to nuanced reasoning enhancements in AI development.

Reasoning models, while promising, grapple with challenges. They demand substantial computational resources, driving up costs and limiting accessibility (a problem we haven’t solved yet even with pre-reasoning models). Moreover, it remains uncertain whether their performance improvements can be sustained.

Are reasoning models a definitive path to superior AI, or do they represent a post-training trend with diminishing returns?

OpenAI's o3 isn't merely a new model; it's a statement of intent. It signifies a commitment to continue advancing the “reasoning” approach to AI models, possibly inching closer to the dream of AGI.

How will 2025 receive this foreign visitor?