Your brain on ChatGPT

Cognitive debt in the age of AI and how to stay solvent

Artificial intelligence tools like OpenAI’s ChatGPT have rapidly become embedded in how we learn and work, but could relying on them come at a cognitive cost? This month, a study from MIT’s Media Lab set out to investigate how using ChatGPT might affect our brains, especially during learning tasks. The researchers divided 54 participants (aged 18-39) into three groups and asked them to write essays under three different conditions:

Using ChatGPT to assist their writing

Using a traditional search engine (Google)

Using no tools at all (just their own noggins)

Maybe unsurprisingly, they found that the brains of people using ChatGPT showed significantly less activity and engagement than those working unaided.

Should we all be worried about losing our (figurative) minds?

The study and its findings

The study painted a detailed (and maybe worrying) picture of how relying on ChatGPT can lead to a less active brain during a complex task like essay writing. This may sound obvious, but it points to some likely long-term issues with leaning on the tool.

Here are some of the major points that emerged:

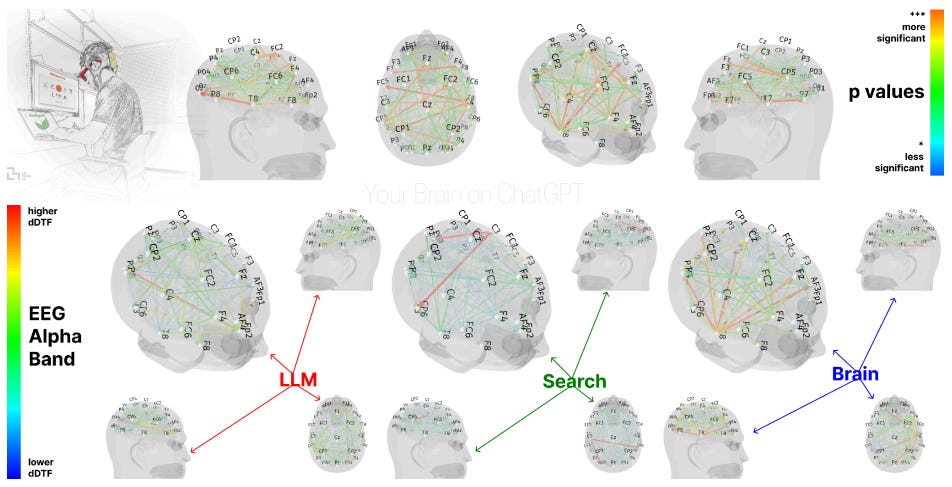

Weakened neural activity with AI assistance. EEG measurements revealed that participants who wrote with ChatGPT’s help had the lowest levels of brain connectivity and engagement, especially in regions linked to memory, attention, and executive function. The more the AI handled, the less the brain had to do.

“Cognitive debt” and learning costs. The researchers use the term “cognitive debt” to describe how short-term ease might lead to long-term drawbacks. The ChatGPT group became more dependent on the AI with each session. By the third essay, many simply copied and pasted ChatGPT’s output with minimal changes.

Diminished memory and ownership. ChatGPT users had trouble recalling what they themselves had written. In post-task interviews and tests, most participants in the AI group struggled to quote accurately from their own essays, while those in the brain-only and Google groups remembered their content much more easily. The ChatGPT group also reported a weaker sense of authorship or personal ownership over their work.

“Soulless” essays and homogenized thinking. Two English teachers blind-reviewed all the essays. They consistently described the ChatGPT-assisted essays as well structured and nearly flawless in grammar, but largely “soulless” and devoid of personal insight. Essays within the ChatGPT group were highly homogeneous and showed less diversity of thought than the brain-only group’s essays.

Comparing initial vs. later use of AI. The study’s fourth session had some participants switch conditions to see how habits carried over:

Those who had been using ChatGPT struggled to write their fourth essay without any help. When the AI crutch was removed, these participants showed weaker neural activity and less coordinated effort. Many could barely remember the content of their previous essays or fell back on phrases they’d picked up from the AI, which suggests that little deep learning had occurred.

Participants who originally wrote essays solo but were then allowed to use ChatGPT performed very differently. They treated the AI as a tool to augment their writing rather than replace it, integrating ChatGPT’s suggestions with their own knowledge. During this assisted rewrite they showed higher neural connectivity and better memory recall, similar to the engagement seen in the search-engine users. In other words, AI can enhance learning if it is introduced at the right point in the process rather than at the very start.

Long-term educational implications. The authors and other experts are concerned about what habitual reliance on AI could mean for education and cognitive development over the years. If younger users offload too much cognitive work to AI, they may weaken the neural pathways that support memory and resilience. The phenomenon resembles what some have called “digital dementia,” in which overreliance on digital aids (like GPS for navigation or Google for facts) weakens natural memory and cognitive abilities over time. The researchers caution that we might be inadvertently training a generation to think less deeply unless we find ways to balance AI use with genuine mental effort.

But it’s not all doom and gloom. The fact that the brain-only writers who later used ChatGPT saw a boost in brain activity across all frequency bands suggests there is a way to have the best of both worlds. Let’s see what that could look like.

Avoiding cognitive pitfalls

The evidence from this study (and others like it) doesn’t mean we should shun ChatGPT or AI assistants. These tools are already so ingrained in society that underusing them carries its own costs.

Instead, we should look for strategies for mindful, moderate use, much as we’ve learned to manage other double-edged technologies. Just as we set app limits or take breaks from doomscrolling, we might adopt similar approaches to AI wellness to protect our precious little brains.

Don’t use AI as a starting point for every problem. There is value in struggling a bit on your own first. If you have an essay or problem to solve, try brainstorming or outlining with just your brain (and maybe even a pen and paper) before you ever turn to ChatGPT. This ensures you give your neural muscles a workout.

Stay actively involved when ChatGPT is working. If you do prompt ChatGPT for help, don’t just copy and paste the answer and consider the task done. Read the AI’s output critically and interact with it. This kind of active engagement forces your brain to process the information, which reduces the memory and learning losses that come from passive consumption. Think of ChatGPT as a junior partner or tutor: it proposes ideas, and you discuss and refine them.

Beware of “cognitive numbness.” One red flag is if using ChatGPT starts to feel too easy. If you notice you’re no longer challenged by tasks you used to wrestle with, or you’re accepting the AI’s answers without question, you’re likely experiencing some digital numbing (similar to how mindlessly scrolling social media can numb your attention). If you catch yourself doing this, it may be time to hit pause on the AI and tackle a task solo to re-engage the ol’ head muscle.

Structure your AI usage with deliberate limits. Taking a page from Apple’s Screen Time, try imposing concrete limits. Allow yourself a certain number of ChatGPT queries per study session, or a time window after which you put the AI away.

Balance convenience with challenge. Consciously decide which tasks to automate with AI and which to do the slow way. A bit of fast food is fine, but you always need some good solid protein and veggies. Choose to do some things the hard way, purely for the mental exercise. Research on neuroplasticity suggests that sustained mental effort is what builds and strengthens brain connections, much as muscles grow stronger through resistance.

Use AI for enhancement, not replacement. When used mindfully, AI can actually improve learning outcomes (the MIT study’s optimistic flip side ✨). Consider workflows where ChatGPT comes in at the editing or idea-expanding stage. For instance, write your code or essay draft first, then ask ChatGPT to review it or suggest improvements. This approach can push you to think more deeply. You might compare your solution with the AI’s, analyze the differences, and pick up new techniques, all of which keeps your mind engaged and growing.

There’s an analogy here to learning with calculators. Calculators are fantastic for speeding up math, but we still teach kids arithmetic by hand first so they develop number sense before we let them loose with a calculator. ChatGPT is probably similar, but on a much larger scale. We should treat it as a powerful calculator for ideas and words, one we learn to wield only after we’ve trained our brains on the basics.

The future will belong to those who can synergize human and artificial intelligence effectively. With a bit of mindfulness, we can ensure that tools like ChatGPT remain a boon to our productivity and knowledge (without inadvertently making us intellectually complacent in the process).
